Teleop & Data

Where great humanoid skills come from.

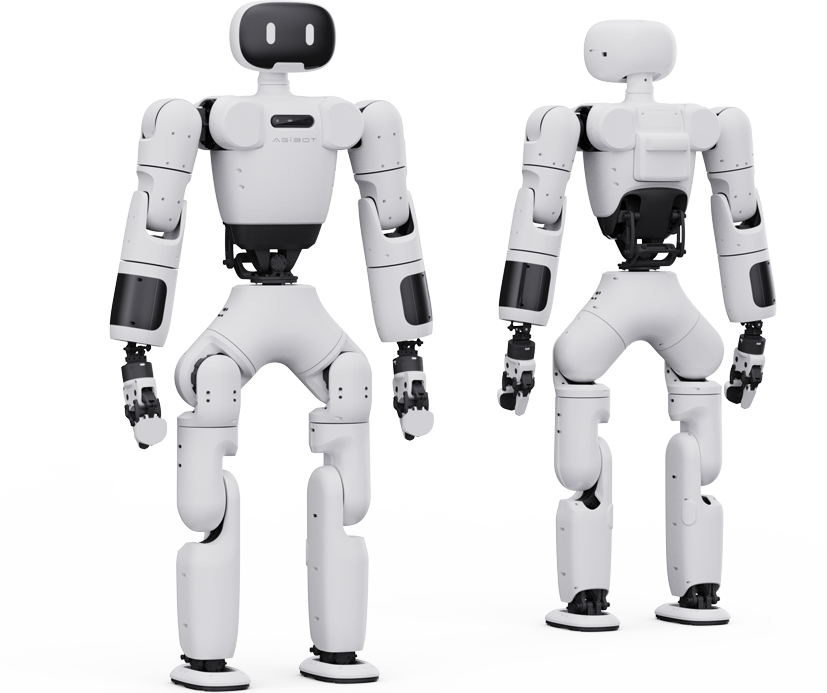

Foundation models like GO-1 are only as good as the demonstrations they are trained on. We help teams stand up the teleoperation rigs, data pipelines, and labeling workflows that turn AgiBot deployments into a continuously improving learner, without operational data ever leaving your network perimeter.

- VR teleop kits configured for AgiBot's joint topology

- Demonstration capture, validation, and dataset curation

- On-prem or VPC training infra so data stays inside your network

- Sim-to-real loops in MuJoCo and Isaac Sim

- Evaluation harnesses your team can re-run across firmware updates